Understanding Context Windows: A Critical Concept for AI Product Managers

The Case of the Disappearing AI Memory

Last week, one of my product management students came to me with a puzzling story.

"I spent over an hour working with an AI assistant on a complex problem," he explained, visibly frustrated. "We went back and forth, building on previous information and refining our approach. But suddenly, the AI started giving inconsistent answers that ignored all our earlier work. I was about to give up when I had a hunch—I opened a fresh chat in a new browser tab and asked my question again, providing all relevant context upfront. Immediately, I got a perfect, coherent response."

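What my student had stumbled upon wasn't a bug or a mysterious AI phenomenon. He had bumped up against the model's context window limits. Once the conversation outgrew that limit, the earliest exchanges were most likely truncated or dropped, so the model could no longer see the work they had done together.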

As AI technologies continue to reshape product development across industries, product managers must develop fluency in key technical concepts that impact product design, capabilities, and limitations. Among these concepts, the context window is critical yet often misunderstood. Let's dive into what context windows are, why they matter for your AI products, and how to work with them strategically.

What Is a Context Window?

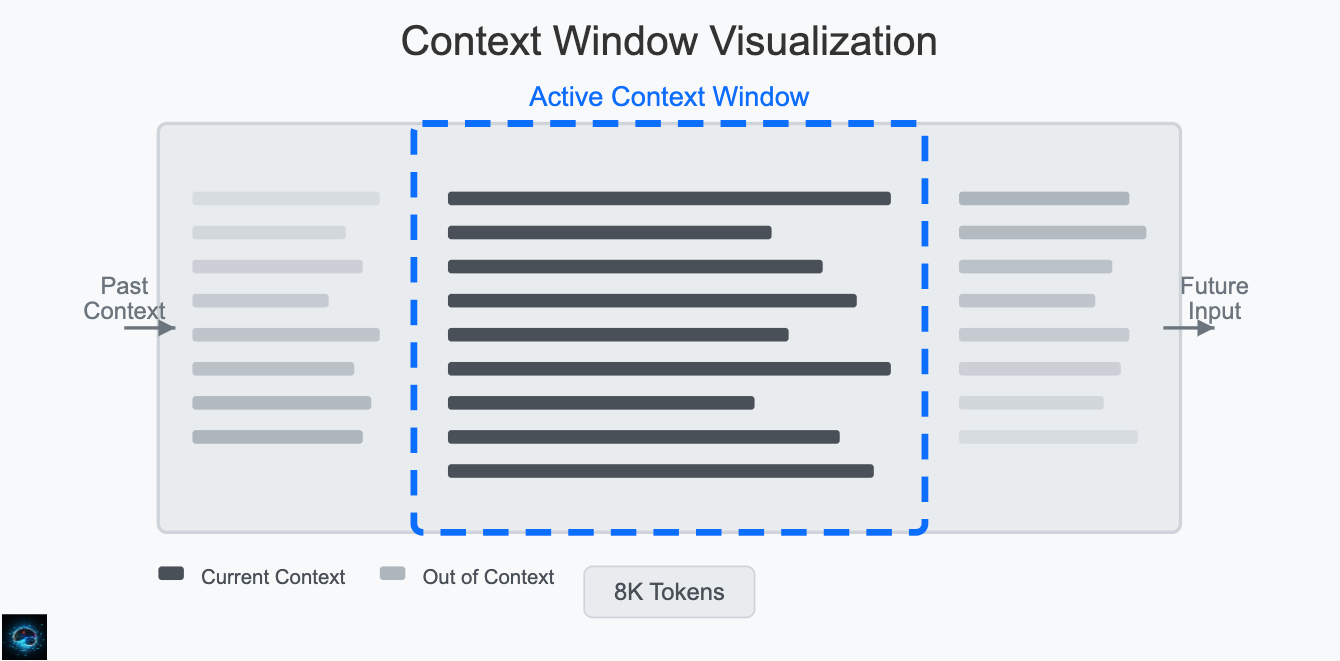

At its core, a context window is the maximum amount of text, measured in tokens (word fragments of roughly a few characters each), that a large language model (LLM) can consider at once. The limit covers both the input the model receives and the output it generates. Think of it as the model's "working memory": the conversation history it can access when formulating responses.

For example, when you prompt ChatGPT or Claude with a question, the model doesn't consider that single question in isolation. It considers:

Your current question

Previous exchanges in the conversation

Any additional context you've provided

System instructions that define its behavior

This information must fit within the model's context window to be processed.
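
To make the "must fit" constraint concrete, here is a minimal Python sketch of a budget check. It assumes a rough rule of thumb of about 4 characters per token for English text; real tokenizers differ by model and language, so treat the numbers as estimates. The function names `estimate_tokens` and `fits_in_window` are illustrative, not part of any vendor's API.

```python
import math

# Assumption: roughly 4 characters of English text per token.
# Real tokenizers vary by model and language.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate the token count of a piece of text."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_window(system: str, history: list[str], extra_context: str,
                   question: str, window: int, reserved_for_output: int) -> bool:
    """Return True if every input plus the reserved output budget fits."""
    parts = [system, extra_context, question, *history]
    used = sum(estimate_tokens(part) for part in parts)
    # The response also consumes the window, so space is reserved for it.
    return used + reserved_for_output <= window
```

Note that the output reservation is part of the check: a prompt that fills the entire window leaves the model no room to answer.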

Why Context Windows Matter for Product Managers

As an AI product manager, understanding context windows impacts several critical aspects of your product:

1. Product Capabilities and Use Cases

The size of your model's context window directly determines what applications are possible:

| Context Window Size | Potential Applications |
| --- | --- |
| Small (2K-4K tokens) | Simple Q&A, brief content generation, basic chatbots |
| Medium (8K-16K tokens) | Document summarization, more complex conversations, moderate content analysis |
| Large (32K-100K+ tokens) | Entire document analysis, book summarization, long-form content generation, complex reasoning across extensive data |

2. User Experience Considerations

Context windows influence how users interact with your product:

Conversation Persistence: How much conversation history can your AI remember?

Document Processing: Can users upload entire documents for analysis, or must they be chunked?

Response Completeness: Will your AI have enough context to provide comprehensive answers?

3. Technical Architecture Decisions

The context window affects your overall system design:

Memory Management: How will you handle conversations that exceed the context window?

Retrieval Augmented Generation (RAG): Will you need to implement external knowledge retrieval?

Prompt Strategy: Should you optimize prompts for context efficiency?

4. Cost and Resource Allocation

Larger context windows typically mean:

Higher computational requirements

Increased latency (response time)

Higher operational costs

Greater energy consumption
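
One reason costs climb quickly is that chat-style applications typically resend the whole conversation history with every new turn. The sketch below illustrates the arithmetic under simplifying assumptions: every turn adds the same number of tokens, the full history is resent each time, and billing is a flat rate per 1,000 input tokens. The price used is a placeholder, not any provider's actual rate.

```python
def cumulative_input_tokens(tokens_per_turn: int, turns: int) -> int:
    """Total input tokens processed when each turn resends all prior turns."""
    # Turn k sends k turns' worth of text, so the total grows quadratically.
    return sum(tokens_per_turn * k for k in range(1, turns + 1))


def conversation_cost(tokens_per_turn: int, turns: int,
                      price_per_1k_input: float) -> float:
    """Estimated input cost of a conversation at a hypothetical flat rate."""
    return cumulative_input_tokens(tokens_per_turn, turns) / 1000 * price_per_1k_input


# Hypothetical numbers for illustration only.
ten_turns = cumulative_input_tokens(500, 10)     # 27,500 tokens
twenty_turns = cumulative_input_tokens(500, 20)  # 105,000 tokens
```

In this simplified model, doubling the conversation from 10 to 20 turns nearly quadruples the input tokens processed, which is why long sessions can cost far more than their length suggests.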

Recent Advancements in Context Windows

The landscape of context windows is rapidly evolving:

2020: GPT-3 launched with a 2K (2,048) token context window

2022: ChatGPT initially had a roughly 4K token window

2023: Claude expanded to 100K tokens

2024: Several models now support 200K+ token windows

Future: Research continues extending context windows even further

Strategic Considerations for AI Product Managers

When designing products with context windows in mind, consider these strategies:

1. Conduct Context Window Requirement Analysis

Ask yourself:

What is the typical length of input your users will provide?

How much conversation history is necessary for your use case?

What are the tradeoffs between context length and response time?
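
A back-of-the-envelope calculation can help answer the second question. The sketch below estimates how many conversation turns fit in a window once the system prompt and the expected response are accounted for. All inputs are assumptions you would replace with measurements from your own product.

```python
def max_history_turns(window: int, system_tokens: int,
                      reserved_output: int, avg_turn_tokens: int) -> int:
    """Estimate how many past turns fit alongside the system prompt and reply."""
    available = window - system_tokens - reserved_output
    # Never report a negative number of turns.
    return max(0, available // avg_turn_tokens)


# Hypothetical product: 8K window, 500-token system prompt,
# 1,000 tokens reserved for the answer, 300 tokens per turn on average.
turns = max_history_turns(8000, 500, 1000, 300)  # 21
```

If the result is lower than the history your use case needs, that is an early signal to plan for summarization, retrieval, or a larger-window model.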

2. Implement Contextual Memory Management

Consider:

Conversation Summarization: Periodically condense conversation history

Key Information Extraction: Identify and preserve critical details

External Memory Systems: Store information outside the model
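
The first technique can be sketched as a sliding window with a rolling summary: keep the most recent messages verbatim and condense everything older. This is a minimal illustration, not a production design. The `summarize` argument is a hypothetical callable (in a real product it would probably be another model call), `naive_summary` is a stand-in that simply truncates, and the 4-characters-per-token estimate is an assumption.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Message:
    role: str
    text: str


def estimate_tokens(text: str) -> int:
    # Assumption: roughly 4 characters per token (ceiling division).
    return -(-len(text) // 4)


def trim_history(messages: list[Message], budget: int,
                 summarize: Callable[[list[Message]], str]) -> list[Message]:
    """Keep the newest messages within budget; condense the older ones."""
    kept: list[Message] = []
    used = 0
    for msg in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(msg.text)
        if used + cost > budget:
            break
        kept.append(msg)
        used += cost
    kept.reverse()  # restore chronological order
    dropped = messages[:len(messages) - len(kept)]
    if not dropped:
        return kept
    # The summary costs tokens too; the budget here covers recent messages only.
    return [Message("system", summarize(dropped))] + kept


def naive_summary(dropped: list[Message]) -> str:
    """Placeholder summarizer: first 40 characters of each dropped message."""
    return "Earlier conversation (condensed): " + " | ".join(m.text[:40] for m in dropped)
```

Calling `trim_history(history, budget=2000, summarize=naive_summary)` before each request keeps the prompt bounded while preserving a trace of the earlier discussion.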

3. Optimize Prompt Engineering

Develop techniques to:

Craft concise, information-dense prompts

Prioritize the most relevant information

Eliminate redundant context
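
The last two points can be combined into a simple selection step: rank candidate snippets by relevance, skip near-verbatim repeats, and stop adding once the token budget is spent. The sketch below assumes relevance scores are already available (for example from a search system) and uses a rough 4-characters-per-token estimate; `build_context` is an illustrative name.

```python
def estimate_tokens(text: str) -> int:
    # Assumption: roughly 4 characters per token (ceiling division).
    return -(-len(text) // 4)


def build_context(snippets: list[tuple[float, str]], budget: int) -> list[str]:
    """Pick the most relevant, non-duplicate snippets that fit the budget."""
    chosen: list[str] = []
    seen: set[str] = set()
    used = 0
    # Highest relevance first; ties keep their original order.
    for _score, text in sorted(snippets, key=lambda s: s[0], reverse=True):
        key = " ".join(text.lower().split())  # ignore case and extra spaces
        if key in seen:
            continue  # redundant context
        cost = estimate_tokens(text)
        if used + cost > budget:
            continue  # too large for the remaining budget; try smaller ones
        seen.add(key)
        chosen.append(text)
        used += cost
    return chosen
```

The duplicate check here only catches repeats that differ in case or spacing; detecting paraphrased duplicates would need a similarity measure.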

4. Design for Context Limitations

Create user experiences that:

Set clear expectations about memory limitations

Provide mechanisms for users to manage context

Include interfaces for uploading, analyzing, and referencing external content

Real-World Example: Document Analysis Product

Let's consider how context window considerations might play out in developing an AI document analysis product:

Challenge: Legal teams need to analyze 100+ page contracts, but most standard models have context windows that can handle only 20-30 pages.

Context Window Solutions:

Document Chunking: Break documents into semantic sections

Hierarchical Processing: Analyze each section, then synthesize findings

Query-Focused Extraction: Pull only relevant passages for specific questions

Premium Tier: Offer larger context window models for complex documents
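
The chunking and query-focused extraction steps can be sketched in a few lines of Python. This is a simplified illustration: it splits on blank lines as a stand-in for true semantic sections, ranks chunks by naive keyword overlap rather than embeddings, and assumes about 4 characters per token. The function names are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    # Assumption: roughly 4 characters per token (ceiling division).
    return -(-len(text) // 4)


def chunk_by_paragraph(document: str, max_tokens: int) -> list[str]:
    """Group consecutive paragraphs into chunks of at most max_tokens."""
    chunks: list[str] = []
    current: list[str] = []
    used = 0
    for para in (p.strip() for p in document.split("\n\n")):
        if not para:
            continue
        cost = estimate_tokens(para)
        if current and used + cost > max_tokens:
            chunks.append("\n\n".join(current))
            current, used = [], 0
        # A single paragraph larger than max_tokens becomes its own
        # oversized chunk; a fuller version would split it further.
        current.append(para)
        used += cost
    if current:
        chunks.append("\n\n".join(current))
    return chunks


def top_chunks(chunks: list[str], query: str, k: int) -> list[str]:
    """Return the k chunks sharing the most words with the query."""
    terms = set(query.lower().split())

    def score(chunk: str) -> int:
        return len(terms & set(chunk.lower().split()))

    return sorted(chunks, key=score, reverse=True)[:k]
```

For hierarchical processing, each chunk would be analyzed separately and the per-chunk findings combined in a final pass; for a specific question, only the output of `top_chunks` needs to be sent to the model.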

Key Takeaways for AI Product Managers

Context windows fundamentally shape what your AI product can and cannot do. It is crucial to understand these limitations early in the product development process.

Users rarely understand context window limitations. Your product design must account for and manage these constraints invisibly when possible.

Context window size is often a trade-off against cost and speed. Make strategic decisions about where your product needs to optimize.

Creative architectural solutions can overcome context limitations. Consider RAG, chunking strategies, and memory management.

The context window landscape continues to evolve rapidly. Stay informed about new capabilities and be prepared to adapt your product strategy.

What's Next in Context Window Evolution?

As an AI product manager, keep an eye on these emerging trends:

Efficient attention mechanisms that reduce computational demands of larger windows

Long-term memory systems that persist beyond individual sessions

Adaptive context management that dynamically allocates context based on task complexity

Multi-modal context handling that incorporates images, audio, and other data types

Understanding the technical aspects of context windows while translating them into product implications is a core skill for effective AI product management. Mastering this concept will equip you to design AI products that deliver exceptional value while working within technical constraints.

Discussion Questions

How are you currently managing context window limitations in your AI products?

What creative solutions have you implemented to overcome context constraints?

How do you effectively communicate these technical limitations to stakeholders and users?

Share your thoughts and experiences in the comments below!